Privacy and AI Governance Report

This report explores the state of AI governance in organizations and its overlap with privacy management.

Contributors:

Katharina Koerner

Principal Researcher, Technology

Jake Frazier

Senior Managing Director, Head of Information Governance, Privacy & Security

FTI Consulting

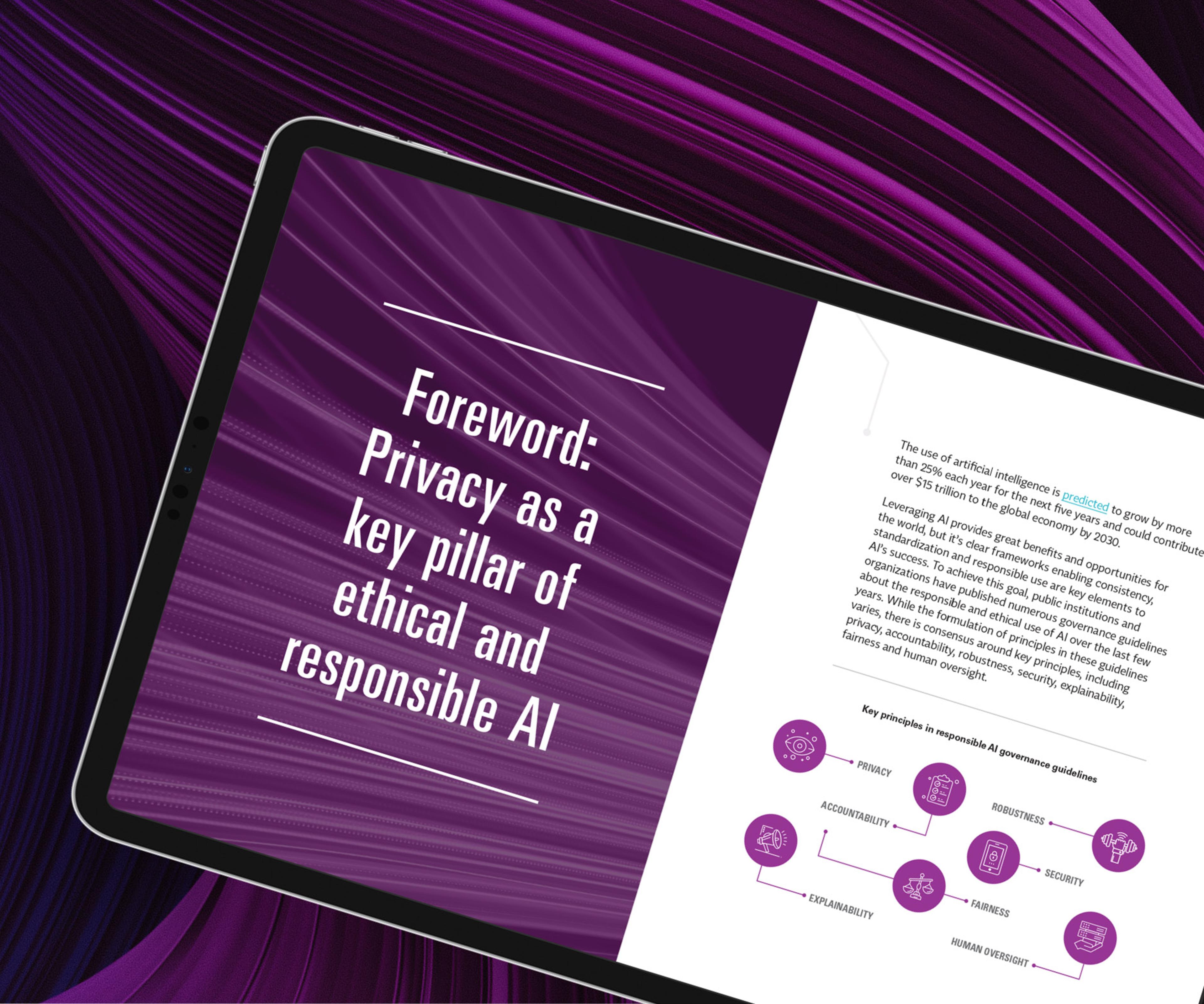

The use of artificial intelligence is predicted to grow by more than 25% each year for the next five years and could contribute over $15 trillion to the global economy by 2030.

Leveraging AI provides great benefits and opportunities for the world, but it is clear that frameworks enabling consistency, standardization and responsible use are key to AI’s success. To this end, public institutions and organizations have published numerous governance guidelines on the responsible and ethical use of AI over the last few years. While the formulation of principles in these guidelines varies, there is consensus around key principles, including privacy, accountability, robustness, security, explainability, fairness and human oversight.

This report explores the state of AI governance in organizations and its overlap with privacy management. We focused on how companies change their processes when striving to use AI according to responsible AI principles such as privacy, accountability, robustness, security, explainability, fairness and human oversight. The study reports on different approaches to governing AI in general and explores how these nascent governance efforts intersect with existing privacy governance approaches.

The scope of the study is limited to interviews with stakeholders in organizations from across six industries in North America, Europe and Asia: technology, life sciences, telecommunication, banking, staffing and retail.

In each interview, we focused on five areas: governance, risk, processes, tools and skills. We identified where each organization stood in implementing responsible AI governance, processes and tools, and how it aligned or planned to coordinate those emerging functions and policies with existing privacy processes.

In addition, we gathered data from facilitated workshops and interactive survey sessions at the annual IAPP Leadership Retreat with 120 privacy thought leaders in July 2022. These individuals included chief privacy officers, technologists (data scientists and AI engineers), lawyers and product managers.

By reading this report, you will:

- Familiarize yourself with the overlap of privacy and ethical AI.

- Explore the state of responsible AI governance and its intersection with privacy programs in organizations.

- Understand whether privacy can support the responsibility of governing AI.

- Read about initial approaches to establishing a responsible AI function in collaboration with privacy governance.

- Learn where organizations are struggling to operationalize responsible AI.

- Inform yourself about how organizations are striving to find the right tools to address responsible AI in practice, and the relevance of the practical implementation of privacy-enhancing technologies in this arena.

- Get insight into how organizations deal with the demand for new skills around privacy and AI.

Key Takeaways

It’s a marathon, not a sprint!

The fast-growing importance of clear governance guidelines for the responsible use of AI leads to a turning point, with organizations going through various maturity levels on their responsible AI journey.

A growing and complex risk landscape

Our analysis shows organizations are aware that, with the deployment of AI systems, new risk vectors require adapting internal governance approaches to new expectations, standards and norms.

AI and privacy have a key overlap

While required in several areas of law and grounded in responsible AI principles, explainability, fairness, security and accountability are also requirements in privacy regulations. While growing efforts to manage the responsible use of AI by organizations have been documented, the impact and utilization of existing privacy programs have not been explored in detail.

Higher AI maturity shows close alignment with privacy

Organizations that clearly explain their governance models for responsible AI describe a close collaboration with privacy governance, often building upon privacy programs.

Organizations seeking tools and skills for responsible AI

Organizations are struggling to procure appropriate technical tools to address responsible AI, such as consistent bias detection in AI applications. While workforce capacity building and cultural change are in their infancy, momentum for responsible AI as an expected and necessary governance aspect is growing as organizations build products or services on AI and machine learning that processes personal data.
