This post shows just a few of the many ways in which design choices can enable different kinds of privacy approaches, focusing on informing users and giving them better control.

Privacy is shaped by more than law, policy or technical capabilities. Coming from a human rights background, I have become fascinated by the link between design and privacy. I discovered as part of my previous work on Ranking Digital Rights that users are generally left in the dark about what tech companies do with their data. Moreover, users have little control over what companies collect or share about them.

Informing users and giving them real control over their personal data are key tenets of a responsible privacy approach. Design plays an important role in both, and I hope designers, in collaboration with internal and external stakeholders, can improve on current practices. Having spent the past months researching this theme, here are a few questions I have come across.

To padlock or not to padlock?

We have all been trained to watch out for the green padlock and the letters "HTTPS" in the address bar of our browsers. When a site uses HTTPS, we can verify the site’s identity and be assured that the connection between us and the site is encrypted. This encryption prevents others, such as our employer, the café where we use our computer or phone, or our internet provider, from reading our communications.

Earlier this year, Google started putting the word "secure" next to the padlock in its Chrome desktop browser, showing its users explicitly what the padlock and HTTPS stand for. However, since sites that use HTTPS can still be malicious, some have questioned whether "secure" is really the right way to describe it.

In contrast, mobile apps, with the exception of mobile web browsers, rarely indicate if they use HTTPS. If we agree that it’s important for our digital safety to let the user experience design “help users understand the protection the software offers,” should we then also include padlock or HTTPS signifiers in mobile user interfaces?

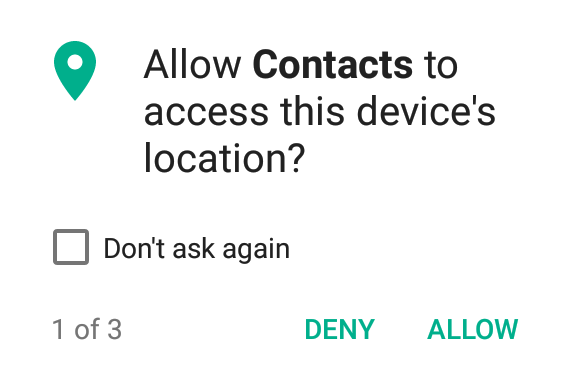

Informed and granular permission requests

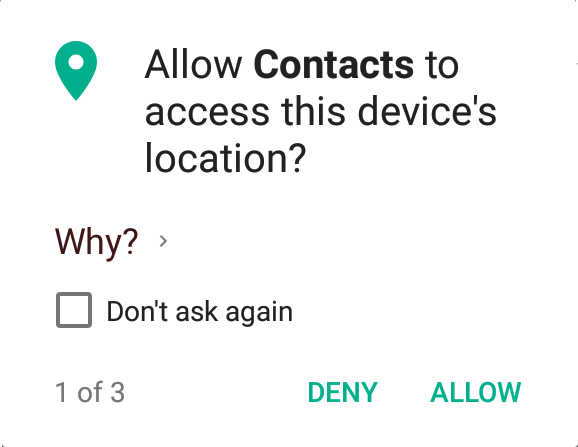

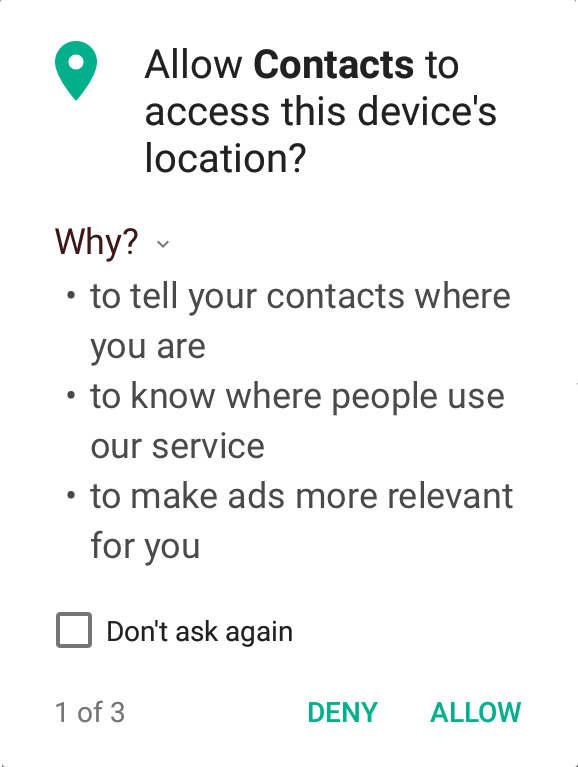

Another area where the user experience can be improved is mobile permission requests. For example, the Contacts function of my Android phone asks to access my location information, and I don’t know why.

The permission request pop-up could be a good moment for designers to help build a trust relationship with users. By explaining why a certain permission is requested, apps can bring the often opaque and inaccessible privacy policies closer to users, making them more relevant by displaying them in direct relation to what the user is doing right then. It requires apps to consider how important a permission is, as they’ll have to clearly and briefly articulate its necessity to users. (Also, "informed consent" is the law in some places!)

On that note, a few weeks ago, I participated in a "Trust, Transparency and Control" Design Jam in Berlin. Below is a mock-up of an "Informed Permissions" concept we worked on. Can explanations such as these help to inform users?

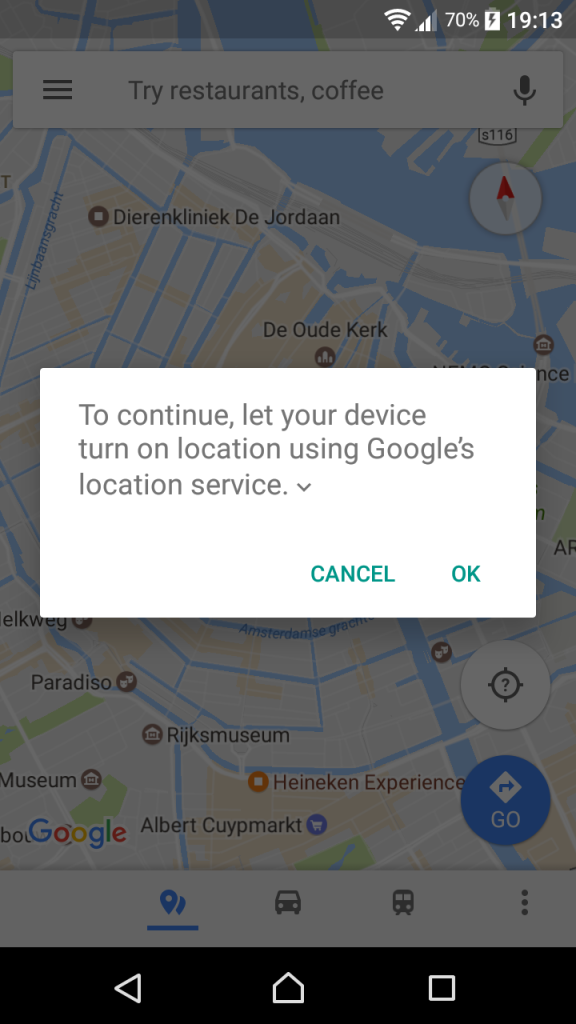

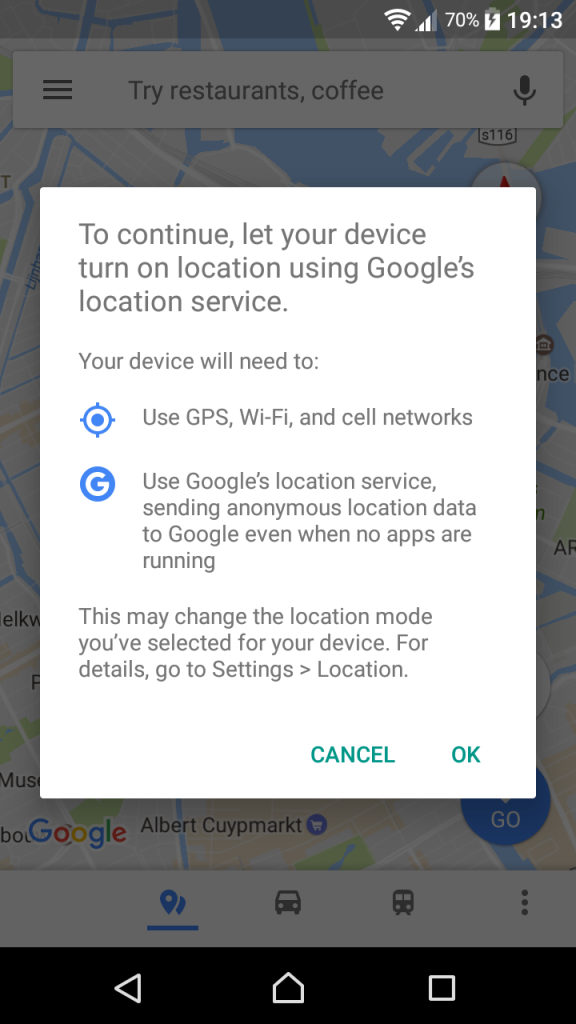

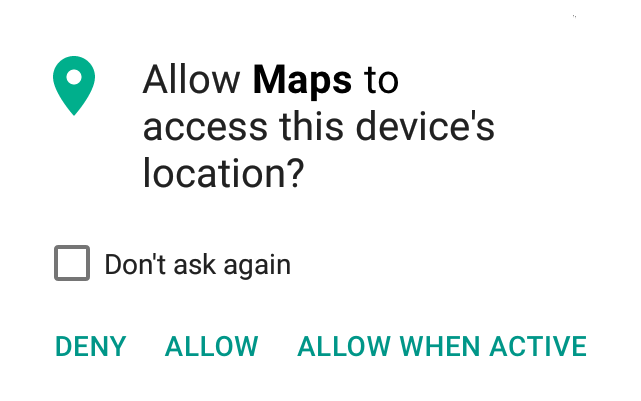

Another important aspect of user privacy is giving users better control. Fortunately, mobile operating systems have largely abandoned the old "take-it-or-leave-it" permissions approach, which required users to accept a fixed set of permissions when installing an app. For most apps, you can now select which permissions you agree with and which you don’t. Going further, Apple’s iOS allows developers to choose between an “always” and a “when using” permission when it comes to location information. However, the inclusion of such options still depends on the developer’s own choice; they can’t be used for requests other than location data, and such a choice is not (yet?) available on Android. This does not sit well with circumstances in which a user would be happy to give permission for accessing the camera, microphone or location data while using the app but is less comfortable with the app having constant access.

To address such concerns, should there be a way to only allow a permission when the app is running in the foreground, such as in the "limited permissions" mock-up below?

This is just one example of giving users more control. Should users also be able to restrict what times, locations or folders on their devices permissions apply to? What makes sense for both privacy protection and usability? I am keen to explore the depth of permission granularity with others interested in this topic. (In the meantime, check out the great permissions catalog that IF put together.)
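To make the granularity question more concrete, here is a minimal sketch of how a foreground-only grant and a time-of-day restriction could be modeled. It is an illustration only, not how iOS or Android implement permissions: `Scope`, `Grant`, `allowed_hours` and `is_allowed` are hypothetical names, and the time window is assumed not to cross midnight.

```python
from dataclasses import dataclass
from datetime import time
from enum import Enum
from typing import Optional, Tuple


class Scope(Enum):
    DENIED = "denied"
    FOREGROUND_ONLY = "foreground_only"  # only while the app is on screen
    ALWAYS = "always"


@dataclass(frozen=True)
class Grant:
    """A user's decision for one permission of one app (hypothetical model)."""
    permission: str
    scope: Scope
    # Optional daily window (start, end) in which the grant applies.
    # Assumption: the window does not cross midnight.
    allowed_hours: Optional[Tuple[time, time]] = None


def is_allowed(grant: Grant, app_in_foreground: bool, now: time) -> bool:
    """Return True if the app may use the permission right now."""
    if grant.scope is Scope.DENIED:
        return False
    if grant.scope is Scope.FOREGROUND_ONLY and not app_in_foreground:
        return False
    if grant.allowed_hours is not None:
        start, end = grant.allowed_hours
        if not (start <= now <= end):
            return False
    return True


camera = Grant("camera", Scope.FOREGROUND_ONLY)
print(is_allowed(camera, app_in_foreground=True, now=time(14, 0)))   # True
print(is_allowed(camera, app_in_foreground=False, now=time(14, 0)))  # False
```

Even this small model shows the usability trade-off: every extra dimension (scope, hours, and potentially locations or folders) is another decision the interface has to ask the user to make.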

Your social history on social networks

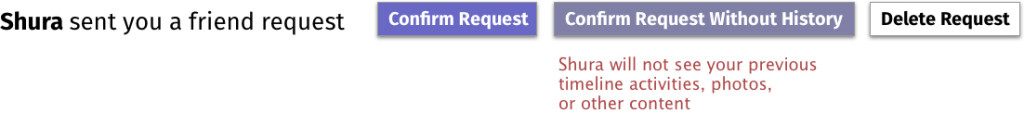

Controlling your own data extends to other digital experiences, as well. If you’re using social networks, you may have faced a tricky decision when someone you just met adds you as a connection.

You can choose to accept them and consequently share your full profile with them, or, if the network allows for it, prevent them from seeing specific types of activities, such as your photos or stories. But even if you are perfectly happy sharing updates about your life with them from now on, having new friends peruse your years-long social archive may make you uncomfortable. At the moment, however, options to make this distinction don’t exist. Is there a use for an option where you can start a new digital relationship on a clean slate (i.e., without them accessing your past activities)?
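As a rough sketch of what a "clean slate" option could mean in practice, the visibility rule might be as simple as filtering on the date the connection was made. No social network is known to expose such a setting; `Post`, `Connection`, `clean_slate` and `visible_posts` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass(frozen=True)
class Post:
    text: str
    created_at: datetime


@dataclass(frozen=True)
class Connection:
    friend: str
    connected_at: datetime
    clean_slate: bool = False  # hypothetical per-connection setting


def visible_posts(posts: List[Post], connection: Connection) -> List[Post]:
    """Return the posts this connection is allowed to see."""
    if not connection.clean_slate:
        return list(posts)
    # Clean slate: hide everything posted before the connection was made.
    return [p for p in posts if p.created_at >= connection.connected_at]


posts = [
    Post("Holiday photos", datetime(2012, 7, 1)),
    Post("New job!", datetime(2017, 9, 15)),
]
new_friend = Connection("Sam", datetime(2017, 9, 1), clean_slate=True)
print([p.text for p in visible_posts(posts, new_friend)])  # ['New job!']
```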

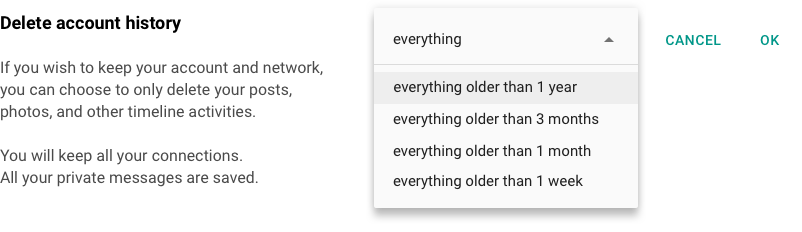

Along similar lines, social networks tend to offer you two choices for deleting past activities: You can delete content, such as posts or photos, item by item, or you can delete your entire account and lose your network as a consequence. But there is no easy way to delete (or make invisible) all your posts up to the present. Nor is there an option to delete everything except for, let’s say, your last month’s activities so that you can keep an interesting profile without carrying the burden of your past activities into your present-day interactions. Would there be a case to make for a deletion feature that offers such granularity?
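The two missing options described above (remove everything up to the present, or everything except a recent window) can be expressed as one rule with a cutoff date. The following is a minimal sketch under that assumption; `Post` and `split_by_retention` are hypothetical names, and "last month" is approximated as 30 days.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass(frozen=True)
class Post:
    text: str
    created_at: datetime


def split_by_retention(
    posts: List[Post], now: datetime, keep_last: timedelta
) -> Tuple[List[Post], List[Post]]:
    """Split posts into (kept, removed) based on a retention window.

    Posts older than `now - keep_last` are marked for removal (or hiding).
    With keep_last=timedelta(0), every post made before `now` is removed.
    """
    cutoff = now - keep_last
    kept = [p for p in posts if p.created_at >= cutoff]
    removed = [p for p in posts if p.created_at < cutoff]
    return kept, removed


now = datetime(2017, 10, 1)
posts = [
    Post("Student party", datetime(2010, 5, 20)),
    Post("Conference talk", datetime(2017, 9, 20)),
]
kept, removed = split_by_retention(posts, now, keep_last=timedelta(days=30))
print([p.text for p in kept])     # ['Conference talk']
print([p.text for p in removed])  # ['Student party']
```

Whether the removed set is deleted outright or only made invisible is a separate design decision; the selection rule is the same in both cases.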

Moving forward

Designers can help translate user needs into practice, and just like legal and policy experts, developers and especially users, they should be part of privacy discussions. I would like to test the ideas above and explore others, from good default settings to incognito windows, and from social logins to update notices. In the meantime, I would love to hear your feedback and collaborate to implement new privacy designs.

Photo credit: akigabo, "Diversion? Decoration? Dehumanization? ... Done?", via photopin (license)